Machine Learning System Design interview

Machine learning interviews stopped being about writing correct answers a long time ago.

There was a time when software interviews felt bounded.

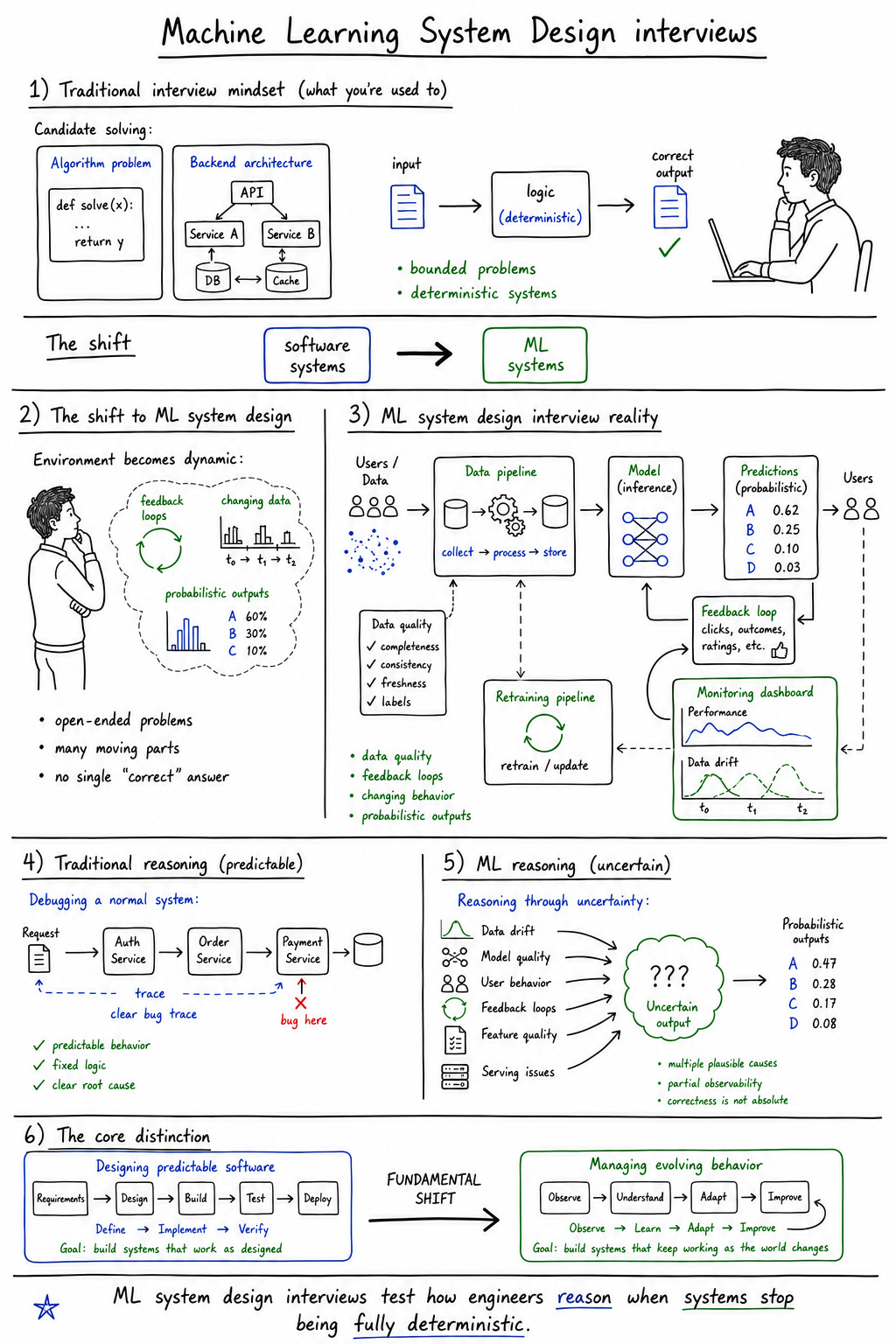

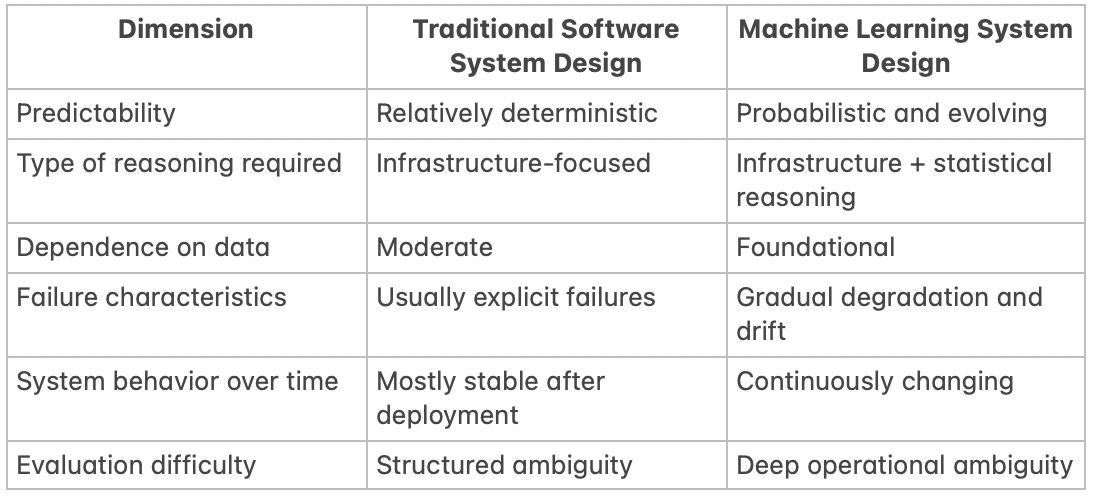

Not easy, necessarily, but bounded. You could disagree with the process, complain about algorithm puzzles, or question whether reversing binary trees reflected actual engineering work, but at least the evaluation itself felt relatively deterministic. Problems had constraints. Solutions had measurable correctness. Even system design interviews, for all their ambiguity, generally revolved around infrastructure that behaved predictably once designed correctly.

Machine learning system design interviews changed that dynamic completely.

Suddenly candidates weren’t just being asked to reason about distributed systems or scalability. They were being asked to reason about systems that evolve over time, systems whose outputs are probabilistic rather than deterministic, systems heavily shaped by data quality, operational feedback loops, and shifting real-world behavior. The interview stopped being about designing software in the traditional sense and became something closer to reasoning through uncertainty under pressure.

And that shift feels deeply connected to how software engineering itself changed between 2023 and 2026.

Machine learning interviews stopped being about writing correct answers a long time ago. Now they’re about reasoning through systems nobody fully controls.

That distinction matters because ML system design interviews are not merely harder versions of backend interviews. They represent a fundamentally different model of technical evaluation. They test whether engineers can think coherently in environments where correctness itself becomes slippery.

And increasingly, that’s what modern software systems actually look like.

Why ML systems feel fundamentally different

Traditional software systems are built around deterministic assumptions. Inputs lead to predictable outputs. Logic behaves consistently. Bugs can usually be reproduced. Failures often originate from explicit implementation errors or infrastructure breakdowns.

Machine learning systems violate those expectations constantly.

The model itself introduces probabilistic behavior into the architecture. Two identical inputs may produce slightly different outputs depending on context, model state, or inference conditions. Performance degrades gradually rather than catastrophically. Drift accumulates quietly. Feedback loops alter future behavior. Data distributions evolve independently of the application logic surrounding them.

That fundamentally changes how engineers reason about systems.

In ordinary backend development, the infrastructure primarily exists to preserve correctness. In ML systems, infrastructure exists partly to manage uncertainty itself. Monitoring systems track statistical behavior rather than explicit failures. Evaluation pipelines measure degradation probabilistically. Retraining workflows become operational necessities because the system’s environment changes continuously.
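The idea that monitoring tracks statistical behavior rather than explicit failures is easier to see in a small sketch. The example below computes a population stability index (PSI), one commonly used drift score, between a reference sample of a single numeric feature and a live sample. The function name, the bin count, and the synthetic Gaussian data are assumptions made for illustration, and the 0.25 alert threshold is only a frequently cited rule of thumb, not a standard.

```python
import math
import random


def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample and a live sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if the reference sample is constant.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the reference range land in the edge buckets.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data, invented for this example.
rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(5000)]  # training-time feature values
same = [rng.gauss(0.0, 1.0) for _ in range(5000)]       # live traffic, no drift
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]    # live traffic, mean has moved

psi_same = population_stability_index(reference, same)
psi_shifted = population_stability_index(reference, shifted)

# Rule-of-thumb threshold (an assumption, tune per feature in practice).
ALERT_THRESHOLD = 0.25
needs_review = psi_shifted > ALERT_THRESHOLD
```

Nothing in this scenario crashes or throws an error. The only signal is that the live distribution no longer resembles the one the model was trained on, which is the kind of quiet degradation the monitoring layer exists to catch.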

This is exactly why ML system design interviews feel cognitively heavier than traditional system design discussions. Candidates are not merely optimizing architectures. They are reasoning about systems whose behavior remains partially unstable even after deployment.

And unlike conventional distributed systems, many ML systems continue evolving after release.

The shift from coding problems to systems thinking

Part of what changed over the last several years is that implementation itself stopped being the primary bottleneck for many engineering organizations.

Code generation tooling improved. Infrastructure abstractions matured. Cloud orchestration became more standardized. AI-assisted development accelerated ordinary implementation work. As a result, interviews gradually shifted away from evaluating whether candidates could write code quickly and toward evaluating whether they could reason through increasingly complex systems.

Machine learning accelerated that transition dramatically.

Modern ML system design interviews rarely focus deeply on implementation syntax because implementation is no longer the interesting part. The difficult part is understanding interactions between components, constraints, trade-offs, operational realities, and evolving behavior over time.

Candidates are expected to think in layers simultaneously:

Infrastructure behavior

Model performance

Data quality

Cost constraints

Real-time latency requirements

Long-term system evolution

That’s a very different cognitive exercise than solving isolated coding problems.

And in many ways, these interviews mirror what engineering roles themselves became after the AI boom. Software systems are no longer static products with deterministic workflows. Increasingly, they are adaptive systems driven by probabilistic models operating inside distributed infrastructure under changing environmental conditions.

The interviews simply inherited that complexity.

The hidden complexity behind “design an ML system”

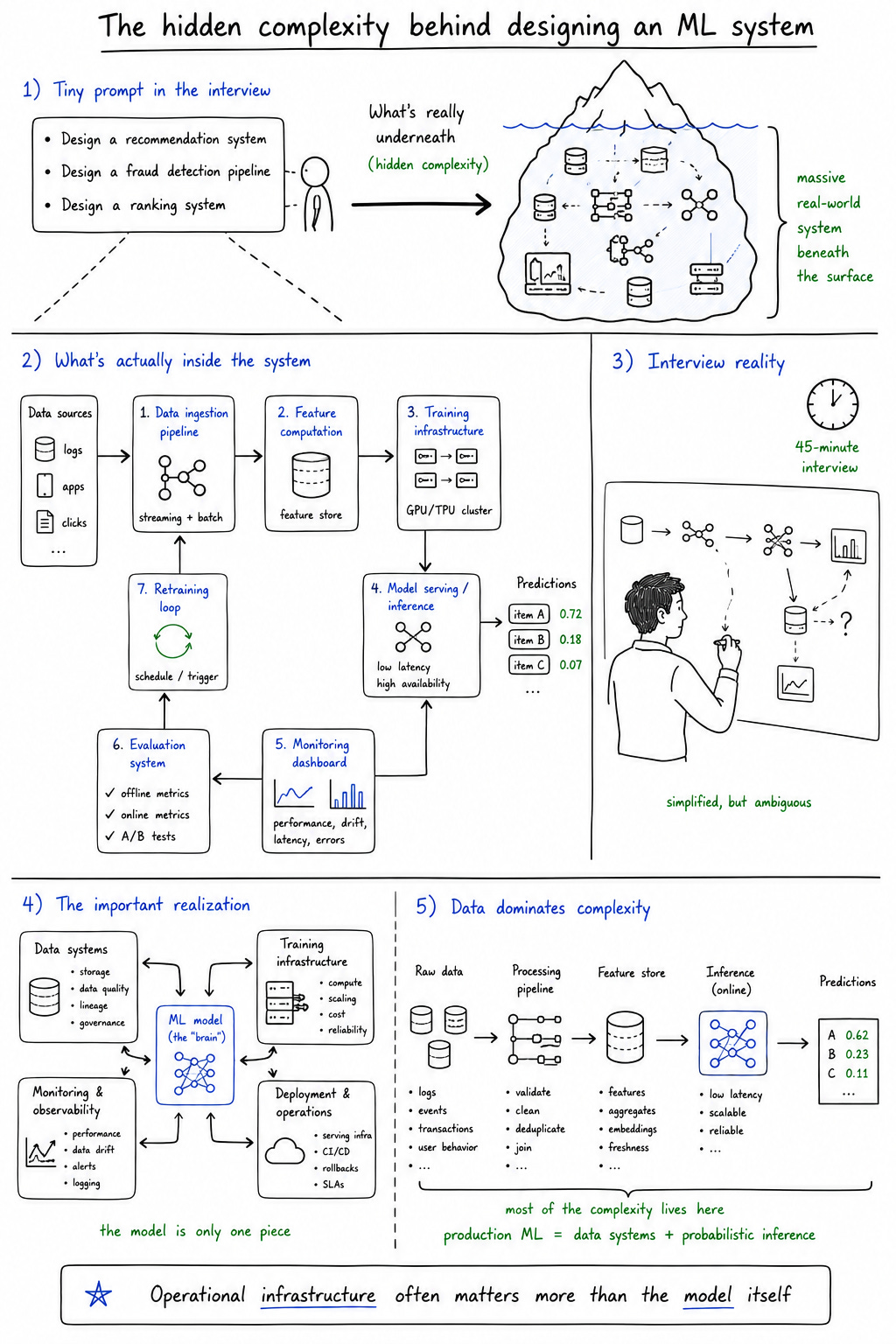

One of the stranger aspects of ML system design interviews is how casually enormous complexity gets compressed into tiny prompts.

“Design a recommendation system.”

“Design a fraud detection pipeline.”

“Design a real-time ranking system.”

These questions sound deceptively manageable because interview framing abstracts away the operational reality underneath. But every one of these systems contains enormous hidden complexity that real engineering organizations spend years managing.

The candidate is expected to reason through data ingestion pipelines, training infrastructure, feature computation, inference orchestration, monitoring, retraining workflows, evaluation systems, and scalability concerns, often within forty-five minutes.

Which is simultaneously unrealistic and revealing.

The interview environment simplifies reality aggressively while still exposing how candidates think about interconnected systems. The simplification is artificial, but the ambiguity is real.

The model is only one piece

Infrastructure decisions shape outcomes

Data quality quietly controls everything

Those last two points especially tend to destabilize candidates because many engineers approach ML interviews assuming the “AI” portion is the center of the system. In practice, data handling and operational infrastructure often dominate the actual engineering complexity.

Production ML systems are mostly data systems wrapped around probabilistic inference layers.

And the interviews increasingly reflect that reality.

Ambiguity becomes the actual interview

What makes these interviews psychologically difficult is not necessarily the scale of the systems themselves. It’s the absence of stable boundaries.

Traditional coding interviews feel constrained even when difficult. There is usually a defined problem space, a measurable goal, and some implicit understanding of correctness. ML system design interviews intentionally remove those stabilizing structures.

Constraints remain incomplete. Requirements stay underspecified. Trade-offs emerge dynamically during discussion. Sometimes the interviewer changes assumptions halfway through intentionally to see how candidates adapt.

At first, many engineers interpret this as poor interview structure.

Then eventually they realize the ambiguity is the structure.

Most candidates aren’t uncomfortable because the systems are large. They’re uncomfortable because nobody tells them what the “right” answer is.

That discomfort exposes something important about modern engineering work generally. Real ML systems rarely arrive with perfectly defined requirements. Teams operate under uncertainty constantly. Data behaves unpredictably. Metrics conflict. Latency requirements compete against model quality. Infrastructure budgets constrain experimentation.

The interview simply simulates that ambiguity in compressed form.

And many candidates struggle not because they lack technical knowledge, but because ambiguity itself creates cognitive pressure. They search for correctness when the real evaluation revolves around reasoning quality under uncertainty.

Why these interviews exploded after the AI boom

Between 2023 and 2026, hiring expectations changed dramatically.

Before the generative AI explosion, machine learning expertise remained relatively specialized. Most backend engineers could operate successfully without deep familiarity with model behavior, embeddings, inference infrastructure, or retrieval systems.

That separation collapsed quickly.

AI-native products emerged across nearly every category of software. Recommendation systems became more sophisticated. Search evolved into semantic retrieval. Copilots entered development workflows. LLM integrations spread into ordinary SaaS products. Suddenly ML awareness was no longer confined to dedicated ML teams.

Companies responded predictably: interview processes evolved to evaluate broader systems thinking around machine learning infrastructure.

And importantly, these interviews expanded beyond pure ML engineering roles.

Backend engineers increasingly encountered ML-flavored infrastructure discussions. Platform engineers needed awareness of inference scaling and vector databases. Full-stack roles started including conversations about recommendation systems or retrieval pipelines.

Machine learning stopped being a specialization and became part of general systems literacy.

That shift fundamentally expanded the role of ML system design interviews inside technical hiring.

The widening expectations placed on engineers

One reason these interviews feel overwhelming is that they quietly combine multiple disciplines simultaneously.

Candidates are increasingly expected to reason across:

Machine learning workflows

Infrastructure scalability

Observability and monitoring

Operational trade-offs

Ten years ago, these domains were often handled by relatively separate teams. Today they increasingly overlap inside production systems.

Modern engineers are expected to understand not only how software behaves, but how systems evolve statistically over time. They must think about infrastructure and models simultaneously. They must reason about operational cost alongside predictive quality.

That interdisciplinary expansion changed the emotional texture of technical interviews. The candidate is no longer evaluated solely as a programmer. They are evaluated as a systems thinker operating across unstable domains.

And honestly, that’s exhausting.

Interviews as simulations of operational thinking

The more ML system design interviews evolved, the less they resembled examinations of technical memorization and the more they resembled compressed simulations of operational reasoning.

The strongest candidates are rarely the ones who recite the most patterns mechanically. They are usually the ones who reason calmly through uncertainty, identify important trade-offs clearly, and prioritize constraints coherently.

This is partly why these interviews frustrate people coming from heavily preparation-driven environments. Memorization helps only up to a point because the actual evaluation revolves around dynamic reasoning.

Operational thinking matters more than architectural recall.

Can the candidate identify bottlenecks?

Can they reason about system degradation over time?

Can they explain trade-offs coherently?

Can they prioritize imperfect decisions under incomplete constraints?

Those are fundamentally operational questions.

And increasingly, that’s what engineering organizations actually care about.

The reason ML interviews feel more cognitively demanding is not merely that they are “harder.” It’s that they operate across multiple unstable dimensions simultaneously.

Traditional systems can often be evaluated based on correctness and scalability. ML systems require reasoning about behavior that remains partially uncertain even after deployment.

The candidate is effectively being asked to design infrastructure around evolving probabilistic behavior under operational constraints.

That’s a fundamentally different category of systems thinking.

Trade-offs sit at the center of everything

Nearly every meaningful ML system discussion eventually collapses into trade-offs.

Accuracy vs latency

Scalability vs operational cost

Model quality vs infrastructure simplicity

Automation vs human oversight

The difficult part is that none of these tensions resolve cleanly.

Higher-quality models often increase inference cost and latency. More aggressive automation introduces monitoring complexity. Better personalization increases privacy concerns. Real-time retraining improves adaptability but makes infrastructure behavior less predictable.
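One way to make the accuracy, latency, and cost tension concrete is to treat model selection as a constrained choice: keep only the candidates that fit the latency and cost budgets, then take the most accurate one that remains. The sketch below does exactly that. The `Candidate` fields, the `pick_model` helper, and every number in it are hypothetical, invented for illustration rather than taken from any real system.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    name: str
    accuracy: float              # offline quality metric, higher is better
    p99_latency_ms: float        # tail latency per request
    cost_per_1k_requests: float  # serving cost in dollars


def pick_model(
    candidates: Sequence[Candidate],
    max_latency_ms: float,
    max_cost: float,
) -> Optional[Candidate]:
    """Return the most accurate candidate within both budgets, or None."""
    feasible = [
        c for c in candidates
        if c.p99_latency_ms <= max_latency_ms
        and c.cost_per_1k_requests <= max_cost
    ]
    return max(feasible, key=lambda c: c.accuracy, default=None)


# Hypothetical candidates, invented for this example.
CANDIDATES = [
    Candidate("small_linear", 0.81, 5.0, 0.02),
    Candidate("gradient_boosted", 0.88, 20.0, 0.10),
    Candidate("large_transformer", 0.93, 180.0, 1.50),
]

# A real-time ranking path with a tight latency budget.
realtime = pick_model(CANDIDATES, max_latency_ms=50.0, max_cost=0.50)

# An offline batch job where latency barely matters.
batch = pick_model(CANDIDATES, max_latency_ms=1000.0, max_cost=5.00)

# Budgets so tight that no candidate qualifies.
nothing = pick_model(CANDIDATES, max_latency_ms=1.0, max_cost=0.01)
```

The same three candidates produce three different answers depending only on the budgets. The constraints, not the models, decide which architecture counts as the right one.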

And the interviews intentionally surface these tensions because the trade-offs themselves reveal how candidates think.

There is rarely a perfect architecture.

There are only architectures optimized around particular constraints.

The uncomfortable reality of interview preparation

One reason candidates often feel perpetually unprepared for ML system design interviews is that preparation material and actual reasoning are not the same thing.

Courses, tutorials, and structured examples create familiarity. They expose patterns. They help candidates recognize components and workflows. But recognition creates a dangerous illusion because it feels very similar to understanding at first.

Then the actual interview starts.

The prompt shifts slightly outside rehearsed examples. Constraints remain unclear. The discussion evolves unpredictably. Suddenly the candidate realizes they understood the diagrams better than the systems themselves.

This is partly why so many engineers describe ML interview preparation as strangely unsatisfying. The more material they consume, the more they realize how much reasoning still feels unstable underneath.

The gap between recognition and operational intuition becomes painfully visible under pressure.

Misconceptions about ML system design interviews

Several misconceptions continue distorting how people approach these interviews.

“There’s a correct architecture.”

Usually there isn’t. Most systems involve competing trade-offs rather than objectively optimal designs.

“Better models solve everything.”

Infrastructure, data quality, observability, and operational constraints often dominate production outcomes far more than marginal model improvements.

“Memorizing patterns is enough.”

Patterns help establish vocabulary. They do not replace reasoning under uncertainty.

“These interviews only matter for ML engineers.”

Increasingly false. ML awareness now extends across backend, infrastructure, platform, and product engineering roles.

These misconceptions persist partly because people still approach ML interviews using mental models inherited from older software interview culture. But the underlying systems, and the interviews built around them, changed faster than those mental models did.

What these interviews reveal about the industry

In many ways, ML system design interviews reveal more about the software industry itself than about individual candidates.

They reflect an industry increasingly dependent on systems that behave probabilistically, evolve continuously, and operate under unstable real-world conditions. They reveal how software engineering itself shifted away from deterministic implementation toward adaptive infrastructure thinking.

And perhaps most importantly, they expose how uncertainty became normalized inside technical work.

The industry isn’t asking engineers to know everything. It’s asking whether they can think clearly while surrounded by uncertainty.

That distinction matters because many candidates interpret ambiguity as evidence of personal inadequacy when in reality the ambiguity reflects the systems themselves.

Modern engineering increasingly revolves around incomplete information, probabilistic behavior, operational trade-offs, and evolving infrastructure constraints.

The interviews merely mirror that environment.

The future of ML system design interviews

It’s difficult to predict exactly how these interviews will evolve over the next several years, especially as AI tooling increasingly automates portions of implementation work.

But one trend seems relatively clear: reasoning will probably matter more, not less.

As copilots improve and infrastructure abstractions mature further, implementation details become easier to outsource to tooling. The difficult part remains deciding what systems should optimize for, how constraints interact, and how trade-offs should be prioritized.

In other words, operational reasoning becomes the durable skill.

Ironically, the more AI assists ordinary development, the more interviews may shift toward evaluating the uniquely human aspects of systems thinking: judgment, prioritization, ambiguity management, and trade-off reasoning.

Not because humans are irreplaceable in the abstract, but because modern systems themselves remain operationally messy.

And messy systems still require coherent reasoning.

Conclusion: interviews as reflections of modern engineering complexity

Machine learning system design interviews feel difficult because they are evaluating something much larger than technical recall.

They are evaluating whether engineers can reason through evolving systems operating under uncertainty, conflicting constraints, imperfect data, and long-term operational pressure. They reflect an industry increasingly shaped by probabilistic infrastructure rather than deterministic software alone.

And perhaps that’s why these interviews feel psychologically different from older technical evaluations.

They don’t just test knowledge.

They test whether candidates can remain intellectually coherent inside environments where certainty itself becomes unstable.

That, more than anything else, may define modern software engineering now.