How to go from "I don't get AI" to building with it

+ 3 key concepts for a strong foundation.

A lot of developers have tried ChatGPT or Copilot. But when it comes to actually building something real, they’re not confident. If that’s you, you’re not alone.

…but you are falling behind. LLMs are here to stay, and if you don’t learn how to build with them fast, the next few years will leave you in the dust.

To help you catch up, I’ll spend most of my effort through the new year addressing the AI topics that readers have requested the most, from RAG to the must-have tools for 2026. But today I’m kicking it off with an overview of what makes building with AI fundamentally different.

LLMs have transformed our development model.

In the 2010s, APIs became our go-to for connecting with third-party services. Building something like Uber didn’t mean reinventing the wheel for every feature—you stitched together services like Google Maps for directions and Stripe for payments. The API model was reliable and boring (in a good way).

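But when you call an LLM through an API, the output isn’t guaranteed to be correct, or even the same from one call to the next.

Take the classic GPT-4o mistake: miscounting the R’s in “strawberry.” The word has three, but the model would often answer two.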

You’d never expect this kind of mistake from a legacy API or SaaS platform (imagine if Google Maps guessed a location with 70% confidence). But with LLMs, this uncertainty is baked in.

Still, that’s not stopping companies from adopting AI: 92% of companies plan to invest more in AI over the next three years. And if you don’t learn the skills to work with AI reliably, you’ll be competing in an AI-hungry market against developers who have.[1]

Grokking AI is all about grasping how to work with its unpredictability.

To help you get there, I’ll cover this today:

3 LLM components that drive unpredictability under the hood

The skills you need to build resiliently with AI

Let’s get started.

3 components that make LLMs powerful (and weird)

The unpredictability you see in LLMs is a direct consequence of how they work under the hood. Let’s talk about how these 3 components contribute to that.

1. Transformers

At the heart of every LLM is the transformer — a neural network architecture built around a mechanism called attention. Attention lets the model weigh which parts of the input matter most when making a prediction.
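To make "weighing which parts of the input matter" concrete, here is a minimal sketch of scaled dot-product attention for a single query. The 2-dimensional vectors are made up for illustration, and a real transformer learns its query, key, and value vectors and runs many attention heads in parallel, so treat this as a toy rather than how any particular model is implemented.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    # One similarity score per input token: how well its key matches the query.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is the weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Hypothetical vectors for a three-token input (assumed, not from a real model).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 1.0]

weights, output = attend(query, keys, values)
print(weights)  # the third token gets the largest weight
print(output)
```

The third key lines up best with the query, so the third token contributes most to the output. Change the query and the weights shift, which is one reason small changes in the input can move the result.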

Unlike older models that process text one word at a time, transformers look at the entire input at once, up to a maximum length called the context window. Then, they predict what comes next based on the patterns they’ve seen before.

This means that transformers don’t understand meaning. Rather, they extend patterns. You’re getting what the model thinks is a likely continuation of the input, but not necessarily a logical result.

This is what causes weird behavior:

The same input can produce different outputs, depending on the surrounding context.

Minor prompt changes can cause big differences in completions.

The model isn’t “following instructions” — it’s guessing what looks right next.

2. Tokenization & Sampling

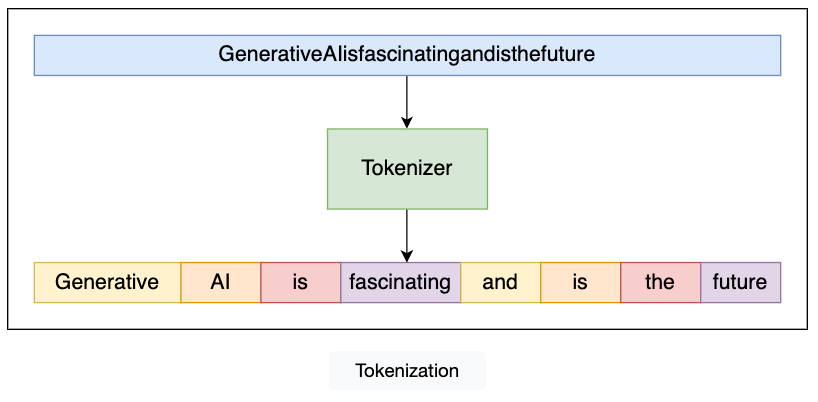

Before an LLM can generate output, it first breaks down the input text into tokens — small chunks of text that may be whole words, word fragments, or even just characters — in a process called tokenization.

For example, the word unpredictability might be split into several tokens like un, predict, and ability. Rather than seeing sentences, the model sees token sequences.
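Here is a rough sketch of that splitting, using a greedy longest-match tokenizer over a tiny vocabulary. Both `tokenize` and `TOY_VOCAB` are hypothetical names invented for this example. Production tokenizers typically use learned schemes such as byte-pair encoding with vocabularies of tens of thousands of entries, so the real splits for a given model will differ.

```python
def tokenize(text, vocab):
    """Greedy longest-match split of text into pieces found in vocab."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first; fall back to a single character.
        for end in range(len(text), i, -1):
            piece = text[i:end]
            if piece in vocab or end == i + 1:
                tokens.append(piece)
                i = end
                break
    return tokens

# Toy vocabulary, assumed for illustration only.
TOY_VOCAB = {"un", "predict", "ability", "able"}

print(tokenize("unpredictability", TOY_VOCAB))  # ['un', 'predict', 'ability']
print(tokenize("unpredictable!", TOY_VOCAB))    # ['un', 'predict', 'able', '!']
```

Notice that the exclamation mark becomes its own token. The model never sees "a word with punctuation," only the sequence of pieces.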

To generate output, the model produces a probability distribution over its vocabulary for the next token. It then picks one token from that distribution, a step called sampling: the choice is random, but weighted by each token’s probability.
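A small sketch of that weighted pick, using only the standard library. The scores in `logits` are invented for illustration (a real model emits one score per vocabulary entry), and `sample_next_token` is a hypothetical helper, not part of any library.

```python
import math
import random

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(logits_by_token, rng):
    """Pick one token at random, weighted by its probability."""
    tokens = list(logits_by_token)
    probs = softmax([logits_by_token[t] for t in tokens])
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical scores for the token that follows "The sky is".
logits = {" blue": 3.0, " clear": 2.0, " falling": 0.5}

rng = random.Random(0)  # seeded so the run is repeatable
draws = [sample_next_token(logits, rng) for _ in range(1000)]
counts = {token: draws.count(token) for token in logits}
print(counts)
```

Most draws land on the likeliest token, but the unlikely ones still show up now and then. That is the same prompt giving different completions.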

That randomness is what makes LLMs feel creative — but it also makes them unpredictable:

Change the temperature, and you change how random the output is.

Use different sampling strategies (like top-k or nucleus sampling), and the model may complete the same prompt in very different ways.

Even punctuation or emoji can split into multiple tokens and skew the results.
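To see how those knobs reshape the distribution, here is a hedged sketch of temperature scaling, top-k filtering, and nucleus (top-p) filtering. The function names and the example scores are assumptions made for this illustration. Hosted APIs expose similar settings, but their exact behavior varies by provider.

```python
import math

def distribution(logits_by_token, temperature=1.0):
    """Softmax over scores divided by temperature (must be > 0)."""
    scaled = {t: x / temperature for t, x in logits_by_token.items()}
    peak = max(scaled.values())
    exps = {t: math.exp(x - peak) for t, x in scaled.items()}
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}

def top_k_filter(probs, k):
    """Keep the k most likely tokens and renormalize."""
    keep = sorted(probs, key=probs.get, reverse=True)[:k]
    total = sum(probs[t] for t in keep)
    return {t: probs[t] / total for t in keep}

def nucleus_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative probability reaches p."""
    kept, cumulative = {}, 0.0
    for token in sorted(probs, key=probs.get, reverse=True):
        kept[token] = probs[token]
        cumulative += probs[token]
        if cumulative >= p:
            break
    total = sum(kept.values())
    return {t: v / total for t, v in kept.items()}

# Hypothetical next-token scores.
logits = {" blue": 3.0, " clear": 2.0, " falling": 0.5}

for temp in (0.5, 1.0, 2.0):
    print(temp, distribution(logits, temperature=temp))

base = distribution(logits)
print(top_k_filter(base, k=2))
print(nucleus_filter(base, p=0.9))
```

Lower temperatures pile probability onto the top token, and higher ones flatten the distribution. Top-k and nucleus filtering both cut off the unlikely tail before sampling, just by different rules.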

You can make outputs far more consistent by setting the temperature to zero, which makes the model always pick the most likely token. But doing that often kills the flexibility and fluency that make LLMs useful in the first place.

3. Training Signals

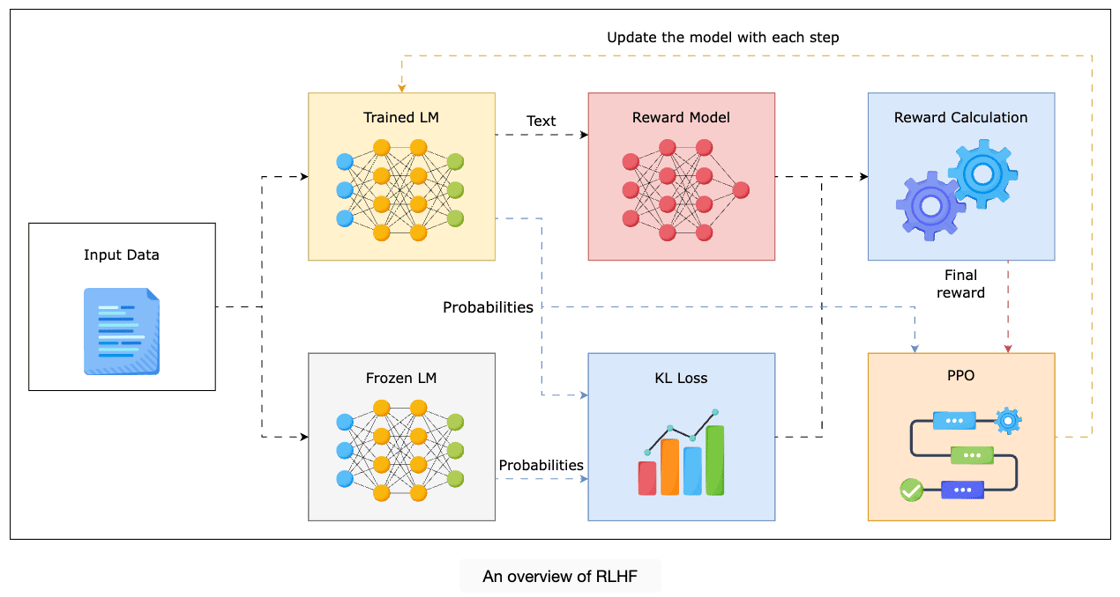

Most LLMs are trained in two big phases:

Pretraining: The model learns language by predicting the next token on massive text datasets.

Fine-tuning: Humans step in and say “this output was helpful,” using techniques like Reinforcement Learning from Human Feedback (RLHF) to make the model more aligned with human preferences.

This fine-tuning and RLHF process makes a model more polite, friendly, and cooperative. But it also introduces some major issues:

It’s rewarded for sounding helpful, not for being factually correct.

It might confidently hallucinate an answer instead of saying “I don’t know.”

Sometimes it’ll refuse to answer a valid query to avoid “sounding risky.”

This means you’re not just debugging your code anymore — you’re also debugging the ghost of every human preference that got baked into the training loop. Fun.

AI skills to mitigate uncertainty

You can’t change how LLMs work, but you can still build reliably with them if you learn skills and strategies that help mitigate their pitfalls.

The most in-demand AI skills are a direct response to the quirks we unpacked:

Prompt engineering: Steers transformer behavior by reducing ambiguity and guiding attention.

Retrieval-Augmented Generation (RAG): Injects relevant, real-world context into the prompt to reduce hallucinations that stem from pretraining or RLHF.

Output validation and filtering: Catches bad outputs caused by token sampling randomness or training misalignment.

Tool use and orchestration: Delegates tasks the model is bad at (math, APIs, logic) to more reliable systems.

System Design for failure: Assumes the model will mess up and builds in retries, backups, and fallbacks.

Evaluation strategies: Measures performance when “correctness” is fuzzy or probabilistic.
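Two of these skills, output validation and designing for failure, fit in a few lines. The sketch below assumes a sentiment-classification task where the model is asked to reply with JSON. `call_model` stands in for whatever client you use, and `make_flaky_model` is a hypothetical stub that returns canned replies so the example runs without an API key.

```python
import json

ALLOWED = {"positive", "negative", "neutral"}

def validate_reply(raw):
    """Return the parsed reply if it has the expected shape, else None."""
    try:
        data = json.loads(raw)
    except (json.JSONDecodeError, TypeError):
        return None
    if not isinstance(data, dict) or data.get("sentiment") not in ALLOWED:
        return None
    return {"sentiment": data["sentiment"]}

def classify_with_retries(call_model, text, max_attempts=3):
    """Retry on invalid output, then fall back to a safe default."""
    for attempt in range(1, max_attempts + 1):
        parsed = validate_reply(call_model(text))
        if parsed is not None:
            return {**parsed, "attempts": attempt, "fallback": False}
    # Every attempt failed validation: return a default and flag it.
    return {"sentiment": "neutral", "attempts": max_attempts, "fallback": True}

def make_flaky_model(replies):
    """Stand-in for a real LLM call: returns canned replies in order."""
    queue = iter(replies)
    return lambda text: next(queue)

flaky = make_flaky_model([
    "Sure! It's positive.",        # not JSON
    '{"sentiment": "happy"}',      # JSON, but not an allowed label
    '{"sentiment": "positive"}',   # valid
])
result = classify_with_retries(flaky, "I love this product")
print(result)  # {'sentiment': 'positive', 'attempts': 3, 'fallback': False}
```

The `fallback` flag matters as much as the retry loop: downstream code and your logs can tell a real answer from a default, which is what lets you measure how often the model is letting you down.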

If you’ve heard of these skills but didn’t understand why they’re useful, you do now.

Reducing uncertainty in LLMs (and your career)

The unpredictability in LLM outputs is a natural side effect of their architecture. If you can embrace uncertainty instead of fighting it, you’ll be ahead of the developers still treating LLMs like APIs.

Start now, with the skills that matter most:

Learn prompt engineering to steer model behavior.

Add basic validation to catch bad outputs before they reach users.

Use RAG to ground your model in facts when accuracy matters.

Bring in tools and fallback logic as your system grows.

And when you’re deploying to real users, track what’s working with evaluation.
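If RAG still feels abstract, here is a toy version of the idea: find the most relevant snippet, then put it in the prompt. This sketch ranks documents by plain keyword overlap, which is a simplification I chose to keep it dependency-free. Real pipelines usually retrieve with embeddings and a vector index. `DOCS`, `STOPWORDS`, and the function names are all made up for the example.

```python
import re

STOPWORDS = {"a", "an", "the", "is", "are", "what", "to", "of", "do", "i"}

def keywords(text):
    """Lowercase words in the text, minus common filler words."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS

def retrieve(question, documents, top_n=2):
    """Rank documents by how many keywords they share with the question."""
    wanted = keywords(question)
    ranked = sorted(documents, key=lambda d: len(wanted & keywords(d)), reverse=True)
    # Drop documents with no overlap at all.
    return [d for d in ranked[:top_n] if wanted & keywords(d)]

def build_prompt(question, documents):
    """Assemble a prompt that grounds the model in retrieved context."""
    lines = [
        "Answer using only the context below.",
        "If the answer is not there, say you don't know.",
        "",
        "Context:",
    ]
    lines += [f"- {doc}" for doc in retrieve(question, documents)]
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)

# Hypothetical knowledge base.
DOCS = [
    "A refund is available within 30 days of purchase.",
    "Support is open Monday through Friday.",
    "Shipping takes 3 to 5 business days.",
]

prompt = build_prompt("What is the refund window after purchase?", DOCS)
print(prompt)
```

Only the refund snippet makes it into the prompt, so the model has the fact in front of it instead of having to recall (or invent) one.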

You don’t need to master all of this overnight. But if you want to build with AI, now’s the time to move. The LLM wave isn’t slowing down — and waiting until the tooling is “mature” or the models are “perfect” is a gamble you will lose.

To keep learning:

You can get hands-on experience with everything from prompting to RAG to fine-tuning to building agents in our comprehensive Skill Path: Become an LLM Engineer.

If you’re ready for more advanced AI skills, explore our entire catalog of Generative AI resources.

For a limited time, you can access these courses and more at a 50% discount (or more) with our Early Bird Black Friday Sale.

Over the next few weeks, we’ll discuss some of the applied techniques you need to engineer successful AI systems, including:

How to set up your first RAG pipeline

How to create reliable agentic systems

Designing for failure with AI System Design

Until then, do you have any burning questions about AI and software development? Leave a comment and let me know.

Happy learning!

— Fahim
