Here's your AI agents starter kit

Now the real fun begins.

Last week, we grounded our models with RAG to stop the hallucinations.

Now, it’s time to let the machine do the work. We’re continuing our AI series by moving from smart answers to autonomous action.

The next frontier in AI development is less about making LLMs ‘smarter’, and more about increasing their ability to act autonomously.

This is where Agentic AI comes in.

But to fully understand AI Agents, we need to compare them to their predecessors:

Traditional LLMs: On their own, these models answer from what they learned in training. They’re good at many things (like summarizing text), but limited in adapting to unforeseen scenarios.

Compound Systems (like RAG): As we learned last week, compound systems connect multiple components (LLMs, databases, search tools) to solve bigger problems. However, they typically follow a fixed, predefined sequence of operations.

AI Agents: Now for the really fun part. These are advanced compound systems with autonomy. Instead of following a rigid script, an Agent leverages the reasoning capabilities of a foundation model to plan, adapt, and choose its own tools to achieve a goal.

With that out of the way (cheers, Vince), let’s get to building.

Understanding the Agentic Workflow

AI agents have three key capabilities: reasoning, acting, and memory.

These capabilities enable AI agents to handle complex tasks by breaking them down into manageable steps, utilizing external tools, and leveraging stored information for personalized interactions.

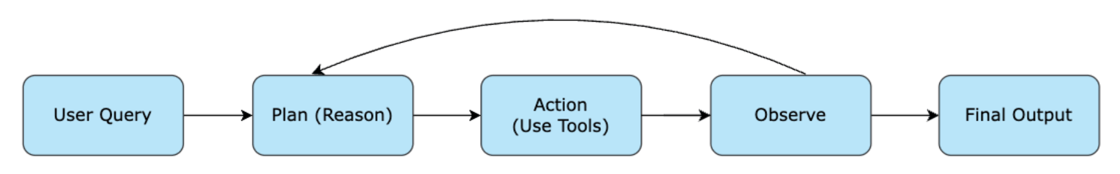

The agentic workflow can be visualized in the following diagram:

In the above diagram, the process starts with a User Query, which is the initial question or problem posed by the user.

The AI agent then proceeds to Plan (Reason), formulating a step-by-step strategy to address the query.

The agent then moves to Action (Use Tools), utilizing various external tools such as search engines or calculators to gather necessary information and perform tasks.

The Observe step involves the agent reviewing the results of its actions, and if needed, it may adjust its plan accordingly, looping back to the reasoning stage.

Finally, the process concludes with the Final Output, where the agent provides a comprehensive and tailored response to the user.
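To make the Plan, Action, Observe cycle concrete, here is a minimal sketch that runs offline. Everything in it is an assumption made for illustration: `plan` is a scripted stand-in for the model’s reasoning, and `search` and `calculator` are toy tools. The point is the loop back from Observe to Plan when the first action comes up empty.

```python
from typing import Callable, Optional

def search(query: str) -> Optional[str]:
    """Hypothetical search tool; returns None to simulate finding nothing."""
    return None

def calculator(expression: str) -> Optional[str]:
    """Hypothetical calculator tool that handles 'a * b' with whole numbers."""
    left, right = expression.split("*")
    return str(int(left) * int(right))

TOOLS: dict[str, Callable[[str], Optional[str]]] = {
    "search": search,
    "calculator": calculator,
}

def plan(query: str, observations: list[str]) -> tuple[str, str]:
    """Scripted stand-in for the model's reasoning: returns (tool, tool_input)."""
    if not observations:
        return "search", query           # first idea: look it up
    return "calculator", "12 * 34"       # search came back empty, so compute instead

def run(query: str, max_steps: int = 3) -> tuple[str, list[str]]:
    trace = [f"query: {query}"]                            # User Query
    observations: list[str] = []
    for _ in range(max_steps):
        tool, tool_input = plan(query, observations)       # Plan (Reason)
        trace.append(f"plan: {tool}({tool_input!r})")
        result = TOOLS[tool](tool_input)                   # Action (Use Tools)
        observation = result if result is not None else "no result"
        observations.append(observation)                   # Observe
        trace.append(f"observe: {observation}")
        if result is not None:
            return f"The answer is {result}", trace        # Final Output
    return "Could not find an answer", trace

answer, trace = run("What is 12 * 34?")
print(answer)
```

The trace records one failed search followed by a switch to the calculator, which is the "adjust its plan" step in miniature.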

How Most Devs Are Building Today

With so many ways to build (OpenAI’s Agents SDK, LangChain, LlamaIndex, CrewAI, AutoGen, and a growing list of lightweight libraries), it can be hard to know where to begin. Every framework advertises a different philosophy, a different abstraction layer, and a different idea of what an “agent” even is.

But when you strip away the branding and look at how developers actually get agents working in the real world, the pattern is surprisingly consistent. No matter which framework you choose, the first successful agent almost always boils down to the same simple setup: one model, one tool, one loop.

Everything else is just structure layered on top.

To keep things (relatively) simple, we’ll be working in Python. Let’s begin.

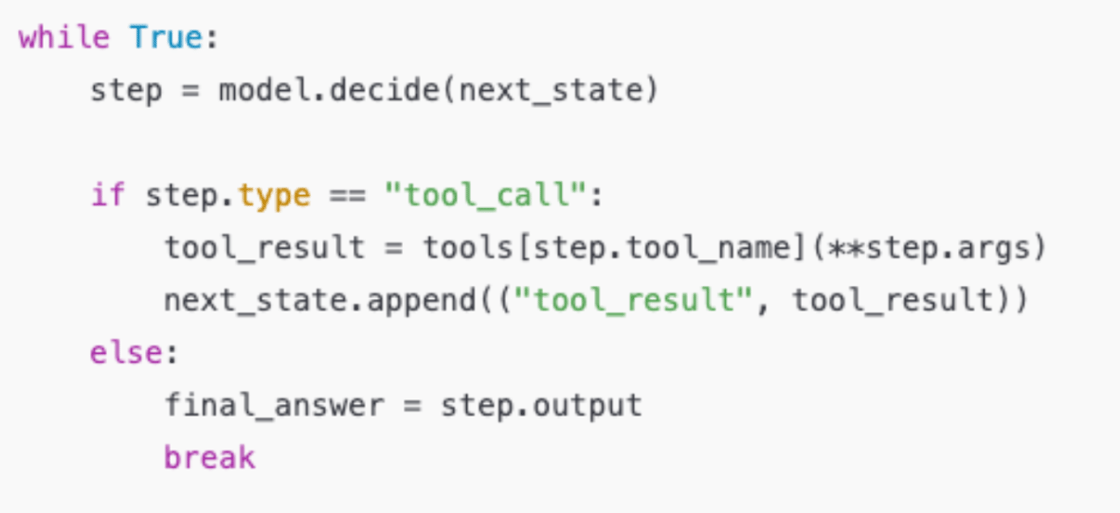

1. Start With a Single-Agent Loop

The simplest agent you can build is:

An LLM (GPT, Claude, Llama — pick your poison)

A small set of tools (like an API)

Instructions (‘don’t screw this up for me’)

A loop that lets it keep thinking and acting until it’s done

Every framework (LangChain, CrewAI, OpenAI’s Agents SDK) is doing the same thing underneath: call the model, run whichever tool it asks for, feed the result back, and repeat until it’s done.
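Here is a rough sketch of that loop with no framework at all. It is not any library’s actual API: `fake_model` is a stand-in for a real LLM call, `get_time` is a hypothetical tool with a canned answer, and the message and reply shapes are assumptions chosen to keep the example runnable offline.

```python
import json
from typing import Callable

# Instructions: the rules the model is given on every turn.
INSTRUCTIONS = "Answer the user's question. Call a tool when you need outside data."

def get_time(city: str) -> str:
    """Hypothetical tool with a canned answer so the sketch runs offline."""
    return f"It is 09:00 in {city}"

# Tools: a small set of functions the model may ask for by name.
TOOLS: dict[str, Callable[..., str]] = {"get_time": get_time}

def fake_model(messages: list[dict]) -> dict:
    """Stand-in for an LLM call: returns a tool request or a final answer."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": last["content"]}
    return {
        "type": "tool_call",
        "name": "get_time",
        "arguments": json.dumps({"city": "Lagos"}),
    }

def run_agent(user_input: str, model=fake_model, max_turns: int = 5) -> str:
    messages = [
        {"role": "system", "content": INSTRUCTIONS},
        {"role": "user", "content": user_input},
    ]
    # The loop: keep thinking and acting until the model says it is done.
    for _ in range(max_turns):
        reply = model(messages)
        if reply["type"] == "final":
            return reply["content"]
        tool = TOOLS[reply["name"]]
        result = tool(**json.loads(reply["arguments"]))
        messages.append({"role": "tool", "name": reply["name"], "content": result})
    raise RuntimeError("agent did not finish within max_turns")

print(run_agent("What time is it in Lagos?"))
```

Swapping `fake_model` for a real model client changes the plumbing, not the shape of the loop.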

If you can build this, you can build almost any agent system.

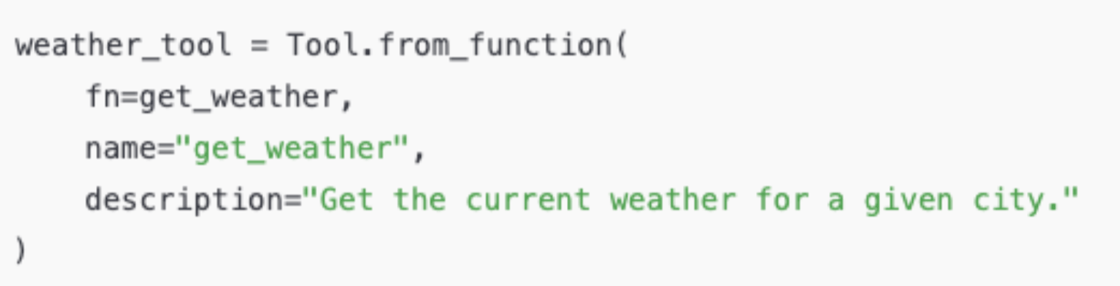

2. Give It One Useful Tool

Early wins usually come from one reliable tool, such as a web search or an internal API call. You write it as a plain function.

Then you register that function as a tool the agent can call.
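As a sketch of what that can look like, the example below uses a hand-rolled registry. Frameworks ship their own registration helpers, so treat the `tool` decorator, `TOOL_REGISTRY`, and the `search_orders` function (with its canned data) as hypothetical names, not a real library’s API.

```python
import inspect
from typing import Callable

TOOL_REGISTRY: dict[str, dict] = {}

def tool(func: Callable) -> Callable:
    """Register a function so the agent loop can look it up by name."""
    TOOL_REGISTRY[func.__name__] = {
        "function": func,
        "description": inspect.getdoc(func) or "",
        "parameters": list(inspect.signature(func).parameters),
    }
    return func

@tool
def search_orders(customer_id: str) -> list[dict]:
    """Return recent orders for a customer."""
    # Canned data standing in for an internal API call.
    fake_db = {"c-42": [{"order_id": "o-1", "status": "shipped"}]}
    return fake_db.get(customer_id, [])

def describe_tools() -> str:
    """Build the text that tells the model which tools exist."""
    return "\n".join(
        f"{name}({', '.join(spec['parameters'])}): {spec['description']}"
        for name, spec in TOOL_REGISTRY.items()
    )

print(describe_tools())
print(TOOL_REGISTRY["search_orders"]["function"]("c-42"))
```

The docstring doubles as the description the model reads, so it is worth writing carefully.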

With just this, your agent can stop guessing and start doing something concrete.

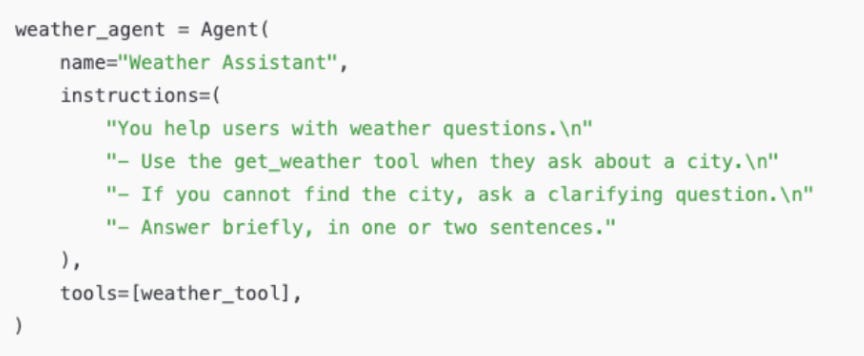

3. Add Tight Instructions (Not a Vague Prompt)

An agent behaves more predictably when its instructions are specific: what its job is, when to use its tool, and what to do when the tool comes back empty.
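For illustration, compare a vague prompt with a tight one. The role, the rules, and the `search_orders` tool name below are all made up for this example, and `build_instructions` is just a small hypothetical helper for keeping the rules numbered.

```python
# Too vague: nothing here tells the agent when to use a tool or what to avoid.
VAGUE_PROMPT = "You are a helpful assistant."

def build_instructions(role: str, rules: list[str]) -> str:
    """Combine a one-line role with numbered, checkable rules."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return f"{role}\n\nRules:\n{numbered}"

INSTRUCTIONS = build_instructions(
    role="You are an order-support agent for an online store.",
    rules=[
        "Use the search_orders tool before answering any question about an order.",
        "If the tool returns no orders, say so; never invent an order status.",
        "Reply in at most three sentences.",
    ],
)

print(INSTRUCTIONS)
```

Each rule is something you can check in a test, which is the practical difference from the vague version.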

That’s all you need for a very capable first agent: one tool, clear rules, and a loop.

Once developers get their first agent running, the next layer is all about reliability: testing the workflow against real inputs, validating tool outputs, and adding lightweight guardrails so the agent doesn’t loop forever or take the wrong action.

These steps aren’t wildly complicated, but they separate a fun demo from something you can trust in production.
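A lightweight version of those guardrails might look like the sketch below. It assumes a scripted `next_action` function in place of a real model, a hypothetical `search_orders` tool, and three simple limits: a step cap, a check for repeated actions, and validation of what the tool returns.

```python
from typing import Callable

class GuardrailError(Exception):
    """Raised when the agent breaks one of the limits below."""

def search_orders(customer_id: str) -> list[dict]:
    """Hypothetical tool with canned data."""
    if customer_id == "c-42":
        return [{"order_id": "o-1", "status": "shipped"}]
    return []

TOOLS: dict[str, Callable[[str], object]] = {"search_orders": search_orders}

def validate_orders(output: object) -> list[dict]:
    """Reject tool output that is not a list of dicts with a 'status' key."""
    if not isinstance(output, list):
        raise GuardrailError(f"unexpected tool output: {output!r}")
    for item in output:
        if not isinstance(item, dict) or "status" not in item:
            raise GuardrailError(f"unexpected order record: {item!r}")
    return output

def guarded_run(next_action: Callable[[int], tuple[str, str]], max_steps: int = 5) -> str:
    """Run scripted (name, argument) actions until 'finish' or a limit is hit."""
    seen: set[tuple[str, str]] = set()
    for step in range(max_steps):
        name, arg = next_action(step)
        if name == "finish":
            return arg
        if name not in TOOLS:
            raise GuardrailError(f"unknown tool: {name}")
        if (name, arg) in seen:          # same call twice means the agent is stuck
            raise GuardrailError(f"repeated action: {name}({arg!r})")
        seen.add((name, arg))
        validate_orders(TOOLS[name](arg))
    raise GuardrailError(f"no final answer after {max_steps} steps")

def well_behaved(step: int) -> tuple[str, str]:
    return ("search_orders", "c-42") if step == 0 else ("finish", "Order o-1 has shipped.")

def stuck(step: int) -> tuple[str, str]:
    return ("search_orders", "c-42")

print(guarded_run(well_behaved))
try:
    guarded_run(stuck)
except GuardrailError as err:
    print(f"stopped: {err}")
```

Running scripted cases like `well_behaved` and `stuck` is also a cheap way to test the workflow before pointing it at a real model.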

Connecting Systems: The Model Context Protocol (MCP)

As agent systems grow, they need a reliable way to talk to your tools, data sources, and internal APIs. Right now, every service speaks its own language — one for calendars, one for file systems, one for search, one for business apps. That quickly turns into a pile of brittle, custom integrations.

MCP (Model Context Protocol) addresses this by giving AI agents a standard way to connect to external systems.

You can think of it as a universal adapter:

Your model runs inside an MCP host.

It communicates through an MCP client.

Tools and data sources sit behind MCP servers.

Once a service exposes an MCP server, any compatible AI agent can use it without bespoke glue code. That keeps your system modular, reusable, and easier to scale.

MCP servers expose three core primitives:

Tools: actions the agent can take

Resources: read-only data the agent can fetch

Prompts: reusable instruction templates hosted on the server
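To see how the three primitives differ, here is a toy in-memory stand-in for an MCP server. It is not the official SDK and involves no real transport. It borrows the JSON-RPC method names used by MCP (`tools/call`, `resources/read`, `prompts/get`), while the result payloads are simplified assumptions and the `add` tool, the file URI, and the `summarize` prompt are invented for the example.

```python
from typing import Any, Callable

class ToyMCPServer:
    """In-memory stand-in for an MCP server (no transport, simplified results)."""

    def __init__(self) -> None:
        self.tools: dict[str, Callable[..., Any]] = {}   # actions the agent can take
        self.resources: dict[str, str] = {}              # read-only data, keyed by URI
        self.prompts: dict[str, str] = {}                # reusable instruction templates

    def handle(self, request: dict) -> dict:
        method = request["method"]
        params = request.get("params", {})
        if method == "tools/list":
            result = {"tools": sorted(self.tools)}
        elif method == "tools/call":
            func = self.tools[params["name"]]
            result = {"content": func(**params.get("arguments", {}))}
        elif method == "resources/read":
            result = {"contents": self.resources[params["uri"]]}
        elif method == "prompts/get":
            template = self.prompts[params["name"]]
            result = {"prompt": template.format(**params.get("arguments", {}))}
        else:
            error = {"code": -32601, "message": "Method not found"}
            return {"jsonrpc": "2.0", "id": request["id"], "error": error}
        return {"jsonrpc": "2.0", "id": request["id"], "result": result}

def call(server: ToyMCPServer, method: str, **params: Any) -> dict:
    """Play the client role: wrap a method and its params in a JSON-RPC request."""
    return server.handle({"jsonrpc": "2.0", "id": 1, "method": method, "params": params})

server = ToyMCPServer()
server.tools["add"] = lambda a, b: a + b
server.resources["file:///notes/todo.txt"] = "ship the agent"
server.prompts["summarize"] = "Summarize this text: {text}"

print(call(server, "tools/call", name="add", arguments={"a": 2, "b": 3}))
print(call(server, "resources/read", uri="file:///notes/todo.txt"))
print(call(server, "prompts/get", name="summarize", arguments={"text": "hello"}))
```

A real server would also describe each tool’s input schema and speak over stdio or HTTP; the toy keeps only the part that shows which primitive does what.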

It’s still early, but MCP is already becoming the foundation for building larger, more interoperable agent systems. You’ll start seeing it show up everywhere: IDEs, assistants, multi-agent frameworks, and developer tooling.

Get Started With Agents Today

The transition from a smart prompt to an autonomous Agent is the biggest leap in modern AI engineering. If you can master Agents, you can build systems that truly automate major business processes.

Here are some resources to help you get started:

👉 Still need to home in on the basics? Find your footing with Generative AI Essentials

👉 Ready to command your first digital assistant? Dive deeper into the multi-agent paradigm with Build AI Agents and Multi-Agent Systems with CrewAI.

👉 Orchestrating multiple agentic systems? Explore MCP Fundamentals for Building AI Agents to learn more about the Model Context Protocol, the new standard for connecting AI assistants to systems and data.

You can access these courses (and more) at Black Friday prices right now.

Next week, we’ll take a break from AI to dive deep into a BFCM case study. After that, we’ll return to our AI series with an actionable overview of AI System Design.

Until then, let me know in the comments if there are any other topics you’d like me to cover.

Happy learning!

- Fahim