AI is rewriting the rules of System Design

NVIDIA and OpenAI are placing their bets on agents... and you should be too.

Last week, NVIDIA threw billions at OpenAI.

Not for more GPUs or to train bigger models, but to go deeper into the layer where you work: the dev workflow.

Every chip NVIDIA sells depends on software adoption. And OpenAI isn’t just building models anymore… it’s building the interface layer for how software gets made.

And it’s not just them. GitHub’s Copilot Agents are starting to plan, write, test, and push code.

We’re watching a paradigm shift happen in real time: agents are moving into the dev stack.

If you’re using OpenAI’s function calling, LangChain’s glue-code agent chains, or Replit’s ghost dev, you’re already feeling it — you’re not just writing code anymore. You’re orchestrating agents that do it for you.

And if you want to stay ahead, you’ll need to know how to design systems that can coordinate, observe, and trust these agents.

This emerging skillset is called Agentic System Design. And as essential as it is, it’s also not well understood. So today I’ll share exactly why you need this skill and how to get started.

What is Agentic System Design?

Agentic System Design is the practice of building systems where LLM-powered agents act as semi-autonomous actors. These agents are capable of perceiving context, planning tasks, and executing actions using tools, memory, and logic you define.

In traditional systems, you write step-by-step instructions. But in agentic systems, you set the goal — and the agent figures out how to achieve it.

This shift means designing not just the code paths, but the operating environment:

Which tools agents can access

How tasks are broken down and delegated

What happens when things go off the rails

How you observe, debug, and guide behavior
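To make the goal-versus-steps idea concrete, here is a minimal sketch of an agent loop in plain Python. Everything in it is an assumption for illustration: `stub_planner` stands in for an LLM call, and `TOOLS`, `AgentRun`, and `run_agent` are hypothetical names, not part of any framework. The point is the shape of the operating environment: an allowlist of tools, a step limit, and a history you can inspect later.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical tool registry: the only actions the agent is allowed to take.
TOOLS: dict[str, Callable[[str], str]] = {
    "search_docs": lambda query: f"docs about {query}",
    "write_file": lambda text: f"wrote {len(text)} chars",
}


@dataclass
class AgentRun:
    goal: str
    max_steps: int = 5  # hard stop so a confused agent cannot loop forever
    history: list = field(default_factory=list)  # (tool, argument, result)


def stub_planner(goal: str, history: list) -> Optional[tuple]:
    """Stand-in for an LLM call: returns (tool, argument), or None when done."""
    plan = [("search_docs", goal), ("write_file", f"notes on {goal}")]
    return plan[len(history)] if len(history) < len(plan) else None


def run_agent(run: AgentRun, planner=stub_planner) -> AgentRun:
    for _ in range(run.max_steps):
        step = planner(run.goal, run.history)
        if step is None:  # the planner decided the goal is met
            break
        tool_name, arg = step
        tool = TOOLS.get(tool_name)
        if tool is None:
            # Off-the-rails case: record the refusal instead of executing.
            run.history.append((tool_name, arg, "error: tool not allowed"))
            continue
        run.history.append((tool_name, arg, tool(arg)))
    return run


if __name__ == "__main__":
    for entry in run_agent(AgentRun(goal="rate limiting")).history:
        print(entry)
```

You set the goal and the boundaries; the planner picks the steps. Swapping the stub for a real model call changes the planner, not the guardrails around it.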

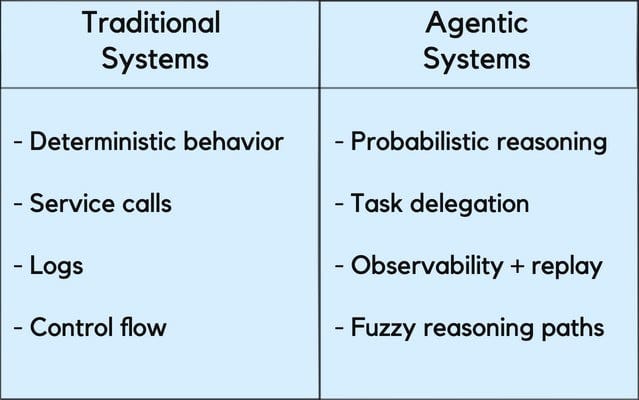

Here’s how that shift looks alongside traditional System Design:

With agentic systems, we have unique factors to consider:

Probabilistic reasoning means agents may respond differently to the same input, especially as context, memory, or prompt framing changes.

Task delegation hands the “how” over to the agent. You’re defining goals, not writing every step.

Observability and replay become critical because you can’t just read logs to understand what happened. You need session traces, intermediate thoughts, and decision records.

Fuzzy reasoning paths replace hard-coded logic. Agents explore solutions instead of following strict workflows — flexible, but harder to control.

If you’ve designed systems before, working with agents requires a bit of a mindset shift. You’ll need to plan for variation, build for coordination, and design for oversight (but not complete control).

A read for added perspective: See how agentic systems compare to microservices in this free edition of the Educative Newsletter: Rethinking Microservices with the Rise of AI Agents.

Why it matters now

GitHub is turning Copilot into a platform for full task delegation.

OpenAI’s tools are evolving into interactive interfaces that collaborate with you.

...And it won’t stop there.

Soon, we’re going to see agents embedded across the entire dev workflow — from VS Code extensions that refactor and deploy code, to AWS shipping native orchestration frameworks, to Notion and Jira spinning up agents that coordinate between planning and production.

Companies are already hiring for the skills to enable this future.

Skills like agent orchestration, tool integration, memory management, and fallback logic are quickly becoming core responsibilities on LLM infra, platform, and applied AI teams.

Just as cloud-native architecture redefined backend engineering, Agentic System Design is now shaping the next generation of software roles.

Developers who learn to work with agents will lead that future. Those who don’t risk being left behind.

Key skills for the agentic future

If you want to design with agents, here’s the skillset to make it real:

1. Agent Orchestration & Workflow Design

This is how agents go from one-off tasks to complex systems.

Learn: LangGraph, CrewAI, AutoGen

Try: Build a multi-step agent that receives a goal and delegates subtasks (e.g., “Write, test, and deploy an endpoint”)

Tip: Use LangGraph to visualize branching logic and recovery paths

👉 Also look into:

Delegation strategies

Multi-agent collaboration (e.g., AutoGen’s group chat)

Role-based task routing (e.g., frontend vs. QA agents)
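Before reaching for a framework, it can help to see delegation and role-based routing in plain Python. This is a rough sketch under stated assumptions: the three worker functions and the fixed `decompose` breakdown are hypothetical stand-ins for LLM-backed agents, and none of this is LangGraph, CrewAI, or AutoGen code.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Subtask:
    role: str  # which kind of agent should handle this piece
    instruction: str


# Hypothetical worker agents; in practice each would wrap a model call.
def backend_agent(instruction: str) -> str:
    return f"[backend] done: {instruction}"


def qa_agent(instruction: str) -> str:
    return f"[qa] done: {instruction}"


def deploy_agent(instruction: str) -> str:
    return f"[deploy] done: {instruction}"


ROUTES: dict[str, Callable[[str], str]] = {
    "backend": backend_agent,
    "qa": qa_agent,
    "deploy": deploy_agent,
}


def decompose(goal: str) -> list[Subtask]:
    """Stand-in for a planner agent: a fixed breakdown, for illustration only."""
    return [
        Subtask("backend", f"write code for: {goal}"),
        Subtask("qa", f"test: {goal}"),
        Subtask("deploy", f"deploy: {goal}"),
    ]


def orchestrate(goal: str) -> list[str]:
    results = []
    for task in decompose(goal):
        worker = ROUTES.get(task.role)
        if worker is None:
            # Recovery path: stop and surface the gap instead of guessing.
            results.append(f"[unrouted] no agent for role {task.role!r}")
            break
        results.append(worker(task.instruction))
    return results


if __name__ == "__main__":
    for line in orchestrate("GET /health endpoint"):
        print(line)
```

The routing table is the part worth designing carefully: it decides who is allowed to do what, and the unrouted branch is where a recovery path plugs in.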

2. Tool Access, Trust, and Control

You’re giving agents real capabilities — make sure they use them safely.

Learn: OpenAI function calling, LangChain agent tool interfaces, AutoGen tools

Try: Give agents scoped access to APIs like file I/O, shell commands, or web search

Tip: Wrap tools with permission checks and fallback logic

👉 Also look into:

Sandboxing via Docker or subprocess isolation

Fallback patterns for safe failure and retry

Human-in-the-loop guardrails for critical actions (e.g., PRs)
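One way to picture "permission checks and fallback logic" is a wrapper that sits between the agent and every tool. The sketch below is an assumption-heavy illustration: `guarded_tool`, `PermissionDenied`, and the `approve` callback are hypothetical names, and the human approval step is reduced to a function that returns True or False.

```python
from typing import Callable, Optional


class PermissionDenied(Exception):
    """Raised when an agent tries a tool outside its scope or without approval."""


def guarded_tool(
    name: str,
    func: Callable[[str], str],
    allowed: set,
    needs_approval: bool = False,
    approve: Callable[[str, str], bool] = lambda name, arg: False,
    fallback: Optional[Callable[[str], str]] = None,
    retries: int = 1,
) -> Callable[[str], str]:
    def wrapper(arg: str) -> str:
        # 1. Scope check: is this tool on the agent's allowlist?
        if name not in allowed:
            raise PermissionDenied(f"{name} is outside this agent's scope")
        # 2. Human-in-the-loop check for critical actions (denied by default).
        if needs_approval and not approve(name, arg):
            raise PermissionDenied(f"{name} was not approved by a human")
        # 3. Try the tool, retrying on failure.
        last_error = None
        for _ in range(retries + 1):
            try:
                return func(arg)
            except Exception as exc:
                last_error = exc
        # 4. Fail safely: use the fallback if one exists, else re-raise.
        if fallback is not None:
            return fallback(arg)
        raise last_error

    return wrapper


def flaky_search(query: str) -> str:
    raise TimeoutError("search backend unavailable")


search = guarded_tool(
    "web_search",
    flaky_search,
    allowed={"web_search"},
    fallback=lambda q: f"no live results for {q!r}",
)

if __name__ == "__main__":
    print(search("agents"))
```

Note the default for `approve` is to deny. For anything that touches a repo or a shell, failing closed is usually the safer starting point.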

3. Memory, Context, and State

Stateless agents are fun. Stateful agents are useful.

Learn: LangChain’s ConversationBufferMemory, Redis-based chat history, or custom memory stores

Try: Build an agent that remembers style preferences or recent decisions across sessions

Tip: Start with JSON + UUIDs, then scale to persistent stores

👉 Also look into:

Task-specific memory (e.g., short-term context)

Identity/state tracking across long-lived agents
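The "JSON + UUIDs" starting point can be as small as the sketch below. It uses only the standard library; the `JsonMemory` class and its method names are hypothetical, and a single JSON file is assumed to be good enough until you need a persistent store like Redis.

```python
import json
import uuid
from pathlib import Path


class JsonMemory:
    """Tiny file-backed memory keyed by a UUID per agent (or per user)."""

    def __init__(self, path):
        self.path = Path(path)
        # Load earlier memories if the file exists, so state survives restarts.
        self.data = json.loads(self.path.read_text()) if self.path.exists() else {}

    def new_agent(self) -> str:
        agent_id = str(uuid.uuid4())
        self.data[agent_id] = {}
        self._save()
        return agent_id

    def remember(self, agent_id: str, key: str, value) -> None:
        self.data.setdefault(agent_id, {})[key] = value
        self._save()

    def recall(self, agent_id: str, key: str, default=None):
        return self.data.get(agent_id, {}).get(key, default)

    def _save(self) -> None:
        self.path.write_text(json.dumps(self.data, indent=2))


if __name__ == "__main__":
    memory = JsonMemory("agent_memory.json")
    agent_id = memory.new_agent()
    memory.remember(agent_id, "code_style", "type hints, 4-space indent")
    # A later session reloads the same file and picks up where it left off.
    print(JsonMemory("agent_memory.json").recall(agent_id, "code_style"))
```

Values must be JSON-serializable, and nothing here handles concurrent writers. Those two limits are usually the signal that it is time to move to a real store.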

4. Observability & Reasoning Debugging

You can’t fix what you can’t see (especially when your system is making up its own mind).

Learn: LangSmith, OpenAI logs, custom tracing formats

Try: Record every agent action with inputs, outputs, and timestamps

Tip: Build a “flight recorder” — then replay weird behavior to find what broke

👉 Also look into:

Visualizing reasoning trees

Dashboarding and alerting for agent failure modes

Metrics for evaluating agent alignment and reliability
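Here is one possible shape for that "flight recorder", sketched with the standard library only. `TraceEvent`, `FlightRecorder`, and `replay` are hypothetical names, and the sketch assumes tool inputs and outputs are JSON-serializable. It records each action with inputs, output, and a timestamp, then replays the trace and reports which steps now behave differently.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Any, Callable


@dataclass
class TraceEvent:
    step: int
    action: str
    inputs: Any
    output: Any
    timestamp: float


@dataclass
class FlightRecorder:
    events: list = field(default_factory=list)

    def record(self, action: str, func: Callable[..., Any], *args: Any) -> Any:
        """Run func(*args) and log what went in, what came out, and when."""
        output = func(*args)
        self.events.append(
            TraceEvent(len(self.events), action, list(args), output, time.time())
        )
        return output

    def dump(self) -> str:
        # One JSON object per line, easy to append to a file or ship to a log store.
        return "\n".join(json.dumps(asdict(event)) for event in self.events)


def replay(trace: str, tools: dict) -> list:
    """Re-run each recorded action; return the steps whose output changed."""
    diverged = []
    for line in trace.splitlines():
        event = json.loads(line)
        new_output = tools[event["action"]](*event["inputs"])
        if new_output != event["output"]:
            diverged.append(event["step"])
    return diverged


if __name__ == "__main__":
    recorder = FlightRecorder()
    recorder.record("add", lambda a, b: a + b, 2, 3)
    recorder.record("upper", str.upper, "ok")
    print(recorder.dump())
```

This version only logs successful calls. A fuller recorder would also capture exceptions and the prompt or context behind each decision, since that is often where the weird behavior starts.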

Upskill before this goes mainstream

The future of development is changing fast. But you still have time to get ahead by learning how to design agentic systems.

If you’re ready to dig deeper, our Agentic System Design course just got a major update, including in-depth case studies on ChainBuddy for workflow automation, the WebVoyager multimodal web agent, and MuLan for multi-object diffusion.

After learning the foundations, you’ll work through hands-on projects and case studies to learn how to:

Design self-improving multimodal web agents

Build autonomous LLM evaluation pipelines

Architect agentic systems for precise, multi-object image generation

Check it out and let me know what you think.

Any other questions about the future of software development? Hit reply and let me know.

Happy learning!

- Fahim