5 System Design lessons from Prime Day

Here's Amazon's playbook for navigating peak load.

I once interviewed a senior engineer who, when asked how he’d design a high-throughput checkout system, opened with:

“Let’s treat this like Prime Day.”

That one sentence told me everything.

He wasn’t thinking in abstract terms. He was thinking in real traffic, real failure, real-world tradeoffs.

That’s what separates a good engineer from a great one.

Even during its longest Prime Day ever last July, Amazon handled 4+ days of peak load without a major hiccup. That takes months of prep, simulation, and fail-safe architecture design.

But you don’t need to run a hyperscaler to use the same playbook.

Whether you’re scaling a weekend side project, a SaaS app for 500 users, or prepping for your first big launch, these strategies still apply.

With Prime Day coming up this week, I wanted to share 5 battle-tested strategies Amazon uses that you can adopt to make your architecture more scalable and resilient (no matter your scale or budget).

Let’s get into it.

1. Scale smart (or watch your infra budget explode)

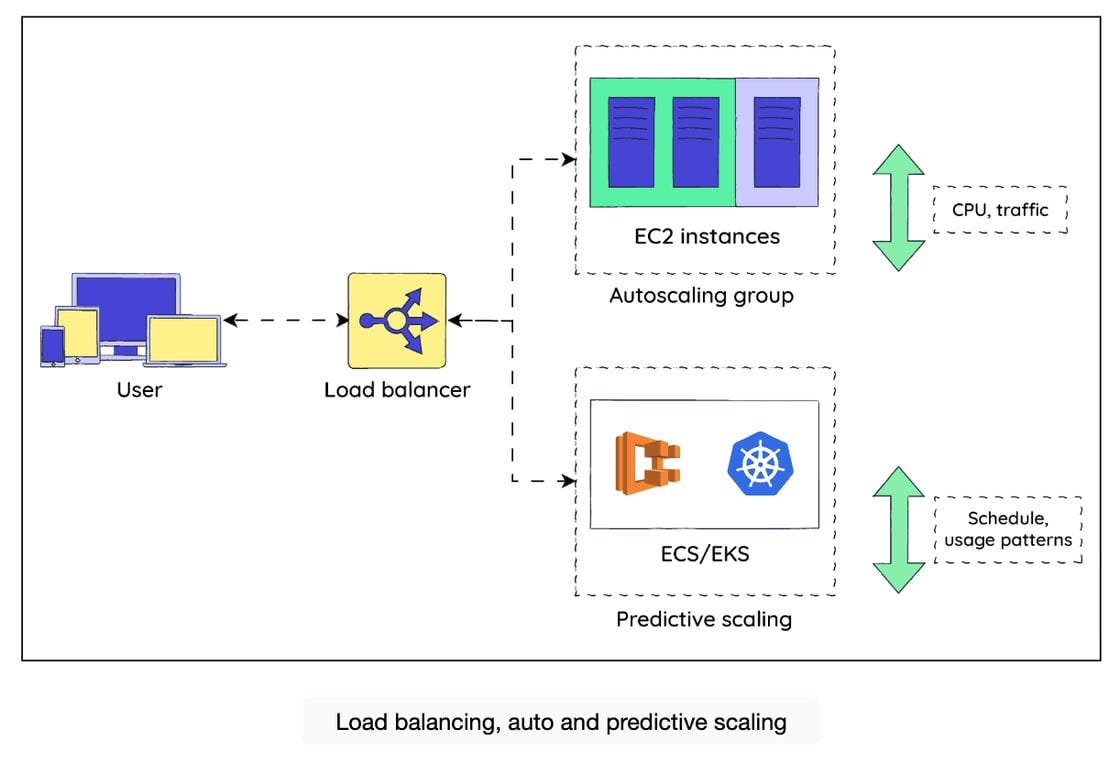

Yes, autoscaling is table stakes. But Prime Day-level scaling isn’t just “turn up the number of EC2 instances.” It’s predictive, strategic, and layered.

Before Prime Day, Amazon’s systems are pre-scaled based on historical traffic patterns. They don’t wait for CPU usage to spike; they scale in anticipation of it.

Behind the scenes, Amazon leverages:

EC2 Auto Scaling, tuned to workload-specific thresholds

ECS and EKS Auto Scaling, to dynamically resize containerized services

Elastic Load Balancing (ELB), which smartly routes traffic only to healthy instances

Predictive Scaling, which crunches prior Prime Day data to avoid lag-based scaling decisions

If the system gets overwhelmed, lower-priority services quietly fade into the background — leaving critical paths like search, cart, and checkout fully operational.
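Here's a minimal sketch of that "fade into the background" idea as priority-based load shedding. The priority tiers, the example services in the comments, and the utilization thresholds are all assumptions for illustration, not Amazon's actual values.

```python
from enum import IntEnum


class Priority(IntEnum):
    CRITICAL = 0  # search, cart, checkout
    STANDARD = 1  # hypothetical example: order history
    OPTIONAL = 2  # hypothetical example: recommendation widgets


# Hypothetical thresholds: shed OPTIONAL work above 70% utilization and
# STANDARD work above 90%. CRITICAL work has no threshold and is never shed.
SHED_ABOVE = {Priority.OPTIONAL: 0.70, Priority.STANDARD: 0.90}


def should_serve(priority: Priority, utilization: float) -> bool:
    """Return True if a request of this priority should still be served
    at the given utilization (0.0 to 1.0)."""
    threshold = SHED_ABOVE.get(priority)
    return threshold is None or utilization < threshold
```

At 75% utilization this serves checkout and standard traffic but skips optional features. In practice the "skip" would usually return a cheap placeholder rather than an error.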

How to borrow this mindset:

✅ Set up autoscaling by service, not just globally

✅ Pre-scale based on known peaks (email drop? Product Hunt launch?)

✅ Design services to degrade gracefully
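Pre-scaling for a known peak can start as simple arithmetic. The sketch below assumes you already have a rough peak forecast and a measured per-instance throughput; the function name, the 30% default headroom, and the minimum of two instances are illustrative choices, not recommendations from AWS.

```python
import math


def prescale_instances(expected_peak_rps: float,
                       rps_per_instance: float,
                       headroom: float = 0.3,
                       min_instances: int = 2) -> int:
    """Number of instances to have running before the spike arrives.

    expected_peak_rps: forecast peak (e.g. from your last launch).
    rps_per_instance:  throughput one instance sustained in load tests.
    headroom:          extra fraction of capacity kept as a safety margin.
    """
    if rps_per_instance <= 0:
        raise ValueError("rps_per_instance must be positive")
    needed = expected_peak_rps * (1 + headroom) / rps_per_instance
    return max(min_instances, math.ceil(needed))
```

For example, `prescale_instances(1000, 100, headroom=0.5)` asks for 15 instances up front, and reactive autoscaling only has to handle whatever the forecast missed.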

2. Assume failure (and make it boring)

Amazon’s systems don’t survive Prime Day because they never fail. They survive because they’re built to fail well. And failure is inevitable in all systems, so you should do the same.

Amazon’s approach:

Amazon RDS Multi-AZ deployments provide automatic failover to a standby for relational databases when an Availability Zone blinks out

DynamoDB Global Tables replicate data in real time across regions for low-latency reads and high-availability writes

Aurora uses synchronous replication within regions and asynchronous replication across regions to keep data safe and services running

DocumentDB replicates data across Availability Zones and automatically promotes a replica if the primary fails, so document workloads can survive zonal failures without manual intervention

All of this is backed by failover tooling and health-aware routing that kicks in before a human even notices.

But you don’t need global failover to borrow this mindset. If you’ve got a read replica, a retry strategy, or a feature flag that disables third-party calls during downtime, you’re already thinking like Amazon.
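A retry strategy with a fallback can be this small. The sketch below retries a flaky call with exponential backoff and jitter, then falls back to a degraded answer (say, a cached value). `call_with_retry` and its parameters are hypothetical names for illustration; `sleep` is injectable so the behavior can be tested without waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(fn: Callable[[], T],
                    fallback: Callable[[], T],
                    attempts: int = 3,
                    base_delay: float = 0.1,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Try fn up to `attempts` times, then return fallback()."""
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt < attempts - 1:
                # Full jitter: wait a random time up to base_delay * 2^attempt
                # so many clients don't retry in lockstep.
                sleep(random.uniform(0, base_delay * 2 ** attempt))
    return fallback()
```

Catching every `Exception` keeps the sketch short. In real code you'd likely retry only on errors you know are transient, such as timeouts and connection resets.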

How to borrow this mindset:

✅ Treat every dependency like it could fail silently — because eventually, it will

✅ Use replicas, retries, or even just backup services for critical paths

✅ Set up health checks that do more than ping; they should reflect business logic (“Can this instance actually serve traffic?”)

✅ Practice failover before you need it. (If you’ve never tested it, it doesn’t exist.)
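To make the health check point concrete, here's a rough sketch of a "deep" check that reports healthy only when every dependency probe passes. The function name and the probe names in the usage note are assumptions; your probes would wrap whatever your service actually needs to serve a request.

```python
from typing import Callable, Dict, Tuple


def deep_health_check(
    checks: Dict[str, Callable[[], bool]]
) -> Tuple[int, Dict[str, str]]:
    """Run each named probe and return (http_status, per-probe results).

    A probe that raises is treated as failed rather than crashing
    the health endpoint itself.
    """
    results: Dict[str, str] = {}
    for name, check in checks.items():
        try:
            results[name] = "ok" if check() else "failed"
        except Exception as exc:
            results[name] = f"error: {exc}"
    healthy = all(status == "ok" for status in results.values())
    return (200 if healthy else 503), results
```

You might call it as `deep_health_check({"db": can_query_db, "payments": can_reach_payments})`, where both probes are hypothetical functions you'd write. Keep probes cheap and time-bounded so the health check doesn't become its own source of load.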

3. Load test like it’s already on fire

Early at Educative, we shipped a big feature after “passing” all our load tests. Staging was green and rollout was smooth — until it wasn’t. Within hours, performance tanked.

The culprit? Our tests didn’t mirror real user behavior: concurrent sessions, I/O bursts, and unexpected global traffic spikes.

That failure forced a mindset shift. We started testing for failure, not just success.

Amazon gets this. That’s why they break things on purpose:

Pre-Prime Day load tests push critical systems far beyond expected traffic to expose bottlenecks and race conditions

AWS GameDay lets teams simulate failure scenarios — from service outages to dependency lag — and practice coordinated incident response

Latency injection and circuit breaker validation help ensure that degraded systems fail cleanly, not catastrophically

Teams even test for cost: will your autoscaling strategy bankrupt you if traffic goes 10x overnight?

How to borrow this mindset:

✅ Run synthetic traffic simulations that reflect real-world behavior, not just request-per-second benchmarks

✅ Test how services respond when dependencies fail, not just when they’re under load

✅ Validate your rollback and failover plans in staging (or chaos environments) with production-like traffic

✅ Measure incident response time like you’d measure latency or throughput — because it’s just as important
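Testing how a service behaves when a dependency fails is easier when the failure handling is small enough to exercise directly. Below is a minimal circuit breaker sketch with an injectable clock, so a test can inject failures and fast-forward through the cool-down. The class, its thresholds, and the `clock` parameter are illustrative assumptions, and the sketch is not thread-safe.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without trying the dependency."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means closed

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("dependency marked unhealthy")
            # Cool-down elapsed: half-open, let one trial call through.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            half_open = self.opened_at is not None
            if half_open or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

In a test, pass a fake clock and a function that always raises, then assert that the breaker starts rejecting calls after the threshold and recovers once the cool-down passes and a trial call succeeds.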

4. “Cache me if you can!”

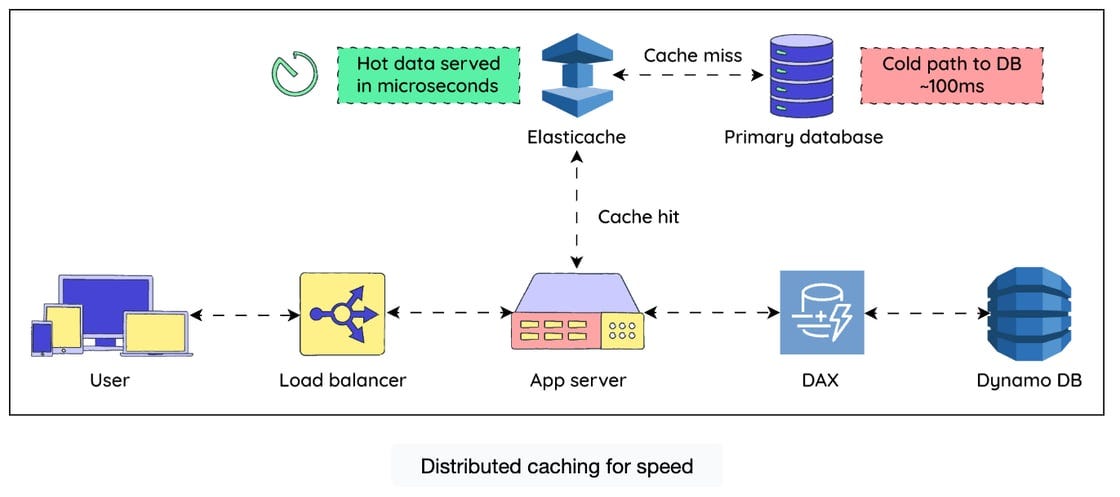

Caching takes heat off your databases and core services. You don’t need to be Amazon to benefit from that. Every time a service hits the database for something that could’ve been cached, a small part of your ops team dies inside.

During Prime Day, even a 100ms delay can cost Amazon millions.

So Amazon caches at multiple layers:

Amazon ElastiCache (Redis/Memcached) sits between services and databases, caching hot paths like product views, recommendations, and session state

Caches are deployed close to compute, reducing network hops and keeping latency low

CloudFront edges serve static assets (and even some dynamic responses) to users globally, minimizing round trips to origin servers

They also warm their caches before the surge, which is a detail often skipped in smaller teams — until the first user hits a cold cache and takes down the DB.

How to borrow this mindset:

✅ Use a CDN, even for some API endpoints (e.g. read-only product data)

✅ Set appropriate TTLs and refresh strategies

✅ Watch your cache hit ratios like you watch your prod dashboards
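The TTL, warming, and hit-ratio ideas fit in one small in-process sketch. This is a stand-in for a real cache like Redis or Memcached, not a replacement; `TTLCache`, its `loader` callback, and the 60-second default TTL are assumptions for illustration.

```python
import time
from typing import Any, Callable, Dict, Hashable, Iterable, Tuple


class TTLCache:
    def __init__(self, loader: Callable[[Hashable], Any],
                 ttl_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self.loader = loader          # fetches from the source of truth
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}
        self.hits = 0
        self.misses = 0

    def _load(self, key: Hashable) -> Any:
        value = self.loader(key)
        self._store[key] = (self.clock() + self.ttl, value)
        return value

    def get(self, key: Hashable) -> Any:
        entry = self._store.get(key)
        if entry is not None and entry[0] > self.clock():
            self.hits += 1
            return entry[1]
        self.misses += 1              # absent or expired: go to the loader
        return self._load(key)

    def warm(self, keys: Iterable[Hashable]) -> None:
        """Pre-load known hot keys before traffic arrives."""
        for key in keys:
            self._load(key)

    def hit_ratio(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0
```

Calling `warm()` with your hottest product IDs before a launch means the first real requests land on a warm cache instead of the database. Warm-up loads are not counted as hits or misses, so `hit_ratio()` reflects only real reads.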

5. Treat launch day like it’s an outage

Amazon treats Prime Day like it’s a planned disaster. They don’t guess what they’ll do if checkout starts failing — they’ve tested it.

Here’s what makes it click:

Live war rooms are spun up in advance, staffed with incident commanders, engineers, and real-time dashboards

CloudWatch collects metrics, logs, and alerts across services, tied directly to business health — not just CPU or memory

AWS Systems Manager automates diagnosis and remediation tasks across instances and services

EC2 Auto Recovery restores instances after underlying hardware failures, and AWS Health flags service events early, saving critical time when the clock is ticking

But the most important ingredient? Everyone already knows what to do. They’ve practiced it.

How to borrow this mindset:

✅ Assign clear roles and escalation paths before you ship

✅ Align monitoring with business outcomes (e.g. checkout success rate, not just 200 OKs)

✅ Automate the obvious stuff (like restarting crashed processes or draining bad nodes)

✅ Run a simulated fire drill the week before. (If it’s a big enough deal to email your customers about, it’s worth rehearsing.)
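Aligning monitoring with business outcomes can start with something as simple as a rolling success rate. The sketch below tracks the last N checkout attempts and signals when the rate dips below a floor. The class name, window size, 95% threshold, and minimum sample count are hypothetical defaults, not tuned values.

```python
from collections import deque


class SuccessRateMonitor:
    def __init__(self, window: int = 100, min_rate: float = 0.95,
                 min_samples: int = 20):
        self.outcomes = deque(maxlen=window)  # keeps only the newest results
        self.min_rate = min_rate
        self.min_samples = min_samples

    def record(self, succeeded: bool) -> None:
        self.outcomes.append(succeeded)

    def success_rate(self) -> float:
        if not self.outcomes:
            return 1.0  # no data yet: nothing has failed
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        # Require a minimum number of samples so a single early
        # failure doesn't page anyone.
        return (len(self.outcomes) >= self.min_samples
                and self.success_rate() < self.min_rate)
```

A checkout handler would call `record(True)` or `record(False)` after each attempt, and an alerting loop would poll `should_alert()`. A service can return 200 OK on every request and still trip this monitor if orders aren't completing.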

If you build the process before you need it, your team won’t be scrambling mid-outage. They’ll just be executing the plan.

You don’t need a billion-dollar infra team to apply these

You just need to act like traffic spikes are part of your product lifecycle.

You will eventually have your own version of Prime Day traffic.

Maybe it’s a Black Friday sale, a feature launch, a PR moment, or a product demo that hits #1 on Hacker News.

You don’t get to choose when your traffic spike happens. But you can choose how ready you are.

Here’s the TL;DR playbook:

Scale smart, not just big

Cache everything you can (and then cache some more)

Expect failure and build for graceful degradation

Test under fire — not in ideal conditions

Prepare your team like launch day is outage day

Until then, bring these strategies to your System Design Interviews. I promise they will help you stand out.

You can get hands-on experience with these strategies and more in some of our most popular System Design courses:

Grokking the Modern System Design Interview: Learn from FAANG systems, AWS outage case studies, and practice your skills in AI Mock Interviews

System Design Deep Dive: Real-World Distributed Systems: Learn to apply other battle-tested strategies to your designs, drawn from real-world systems at Amazon, Google, and Meta.

👉 You can access these courses and more with 50% off an Educative subscription now during Prime Week — offer ends October 12th.

Any other questions about System Design? Hit reply or leave a comment to let me know.

Happy learning!

- Fahim